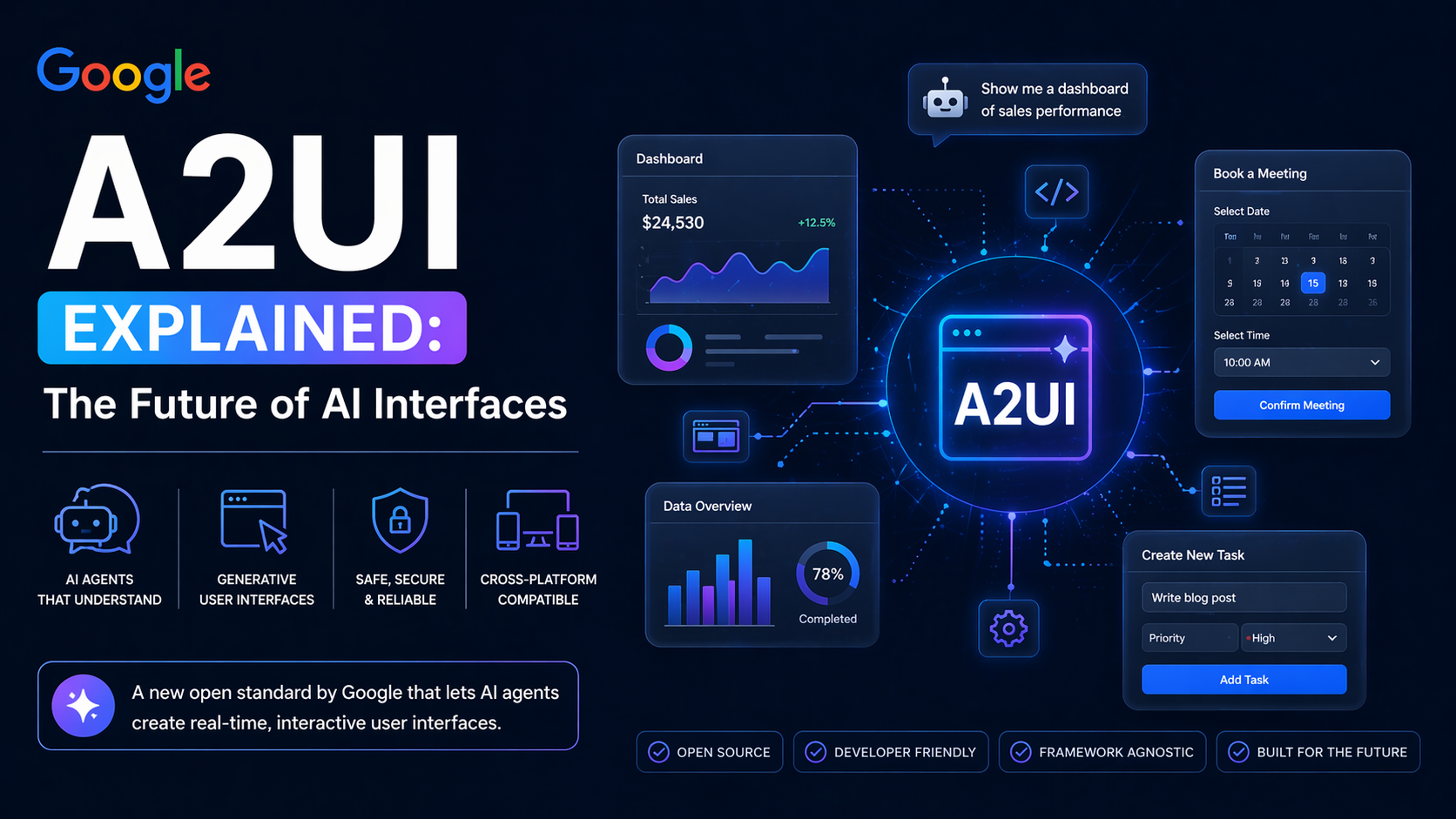

Google A2UI Explained: The Future of AI Interfaces

Here's something that's been quietly bothering me about AI assistants for a while. You ask one to help you book a restaurant. It asks you what day. You reply. It asks what time. You reply again. Then it asks for party size. Three or four back-and-forths for what could've just been a small form with three fields and a submit button. It's like watching someone read you an HTML page out loud instead of just showing it to you.

Google apparently got annoyed about this too. In December 2025, it open-sourced something called A2UI (Agent-to-User Interface), and it's one of those things that feels obvious in hindsight but is a genuinely new idea.

So What Is A2UI, Actually?

A2UI is an open standard that lets agents describe rich native interfaces in a declarative JSON format, while client applications render them using their own components. That's the technical definition. Here's the human one: instead of your AI assistant typing out a long text response, it can now send something that looks and behaves like a real UI (a form, a chart, a booking widget, a dashboard), rendered natively in whatever app you're using.

The key word there is natively. The agent isn't sending you HTML or JavaScript. It outputs a JSON payload that describes a set of components, their properties, and a data model. The client reads this and maps each component to its own native widgets: Angular components, Flutter widgets, web components, React components, or SwiftUI views.
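To make that mapping concrete, here's a minimal sketch in Python. Treat it as an illustration of the idea, not the real A2UI schema: the field names (`components`, `type`, `props`, `bind`, `data`), the `DateField` type, and the renderer functions are all made up for this example, and plain strings stand in for native widgets.

```python
import json

# Hypothetical payload: the field names below are illustrative assumptions,
# not taken from the A2UI specification.
PAYLOAD = json.loads("""
{
  "components": [
    {"id": "date",   "type": "DateField", "props": {"label": "Day", "bind": "day"}},
    {"id": "size",   "type": "TextField", "props": {"label": "Party size", "bind": "party_size"}},
    {"id": "submit", "type": "Button",    "props": {"label": "Book table"}}
  ],
  "data": {"day": "2026-01-15", "party_size": "2"}
}
""")


def render_field(props, data):
    # Stand-in for a native input widget; looks up its value in the data model.
    return f"[{props['label']}: {data.get(props['bind'], '')}]"


def render_button(props, data):
    # Stand-in for a native button widget.
    return f"<{props['label']}>"


# The client owns this mapping. A real app would return Flutter widgets,
# React components, and so on, instead of strings.
RENDERERS = {
    "TextField": render_field,
    "DateField": render_field,
    "Button": render_button,
}


def render(payload):
    """Map each described component to the client's own renderer."""
    data = payload.get("data", {})
    return [RENDERERS[c["type"]](c["props"], data) for c in payload["components"]]


print(render(PAYLOAD))
```

The agent only ever produced the JSON. Everything about how a `Button` looks lives in the client's `RENDERERS` table.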

Which means the interface actually looks like it belongs in the app. It's not some weird iframe bolted on. It matches the design system. It feels right.

Why This Is a Harder Problem Than It Sounds

Multi-agent systems are where this gets genuinely complicated. Imagine an AI orchestrator in one company delegating a task to a remote agent from a completely different organization. That remote agent can't touch your app's front end directly. Historically that meant HTML or script inside an iframe - heavy, often visually inconsistent with the host, and risky from a security standpoint.

A2UI sidesteps this whole mess. Agents send a declarative JSON format describing the intent of the UI. The client application then renders this using its own native component library. The agent describes what the UI should be. The app decides how to render it. That separation is doing a lot of work.

Security-wise, it's a meaningful improvement too. A2UI is a declarative data format, not executable code. The client maintains a catalog of trusted components like Card, Button, TextField. The agent can only reference types in that catalog. So there's no arbitrary script execution from model output. The agent can't inject something malicious because it literally doesn't have the ability to send executable code, just structured descriptions that the client knows how to handle.
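Here's roughly what that catalog check could look like on the client side. This is a sketch under assumptions: the `validate` function and the shape of the component dictionaries are hypothetical, and only the component names Card, Button, and TextField come from the description above.

```python
# Component types the client is willing to render.
TRUSTED_CATALOG = {"Card", "Button", "TextField"}


def validate(components, catalog=TRUSTED_CATALOG):
    """Return the components unchanged if every type is in the catalog.

    Raises ValueError listing any type the client does not recognize,
    so nothing outside the catalog ever reaches the renderer.
    """
    unknown = [c.get("type") for c in components if c.get("type") not in catalog]
    if unknown:
        raise ValueError(f"untrusted component types: {unknown}")
    return components


# A well-behaved payload passes through untouched.
ok = validate([{"type": "Card"}, {"type": "Button"}])

# A payload that tries to smuggle in something else is rejected outright.
try:
    validate([{"type": "Card"}, {"type": "Script"}])
    rejected = False
except ValueError:
    rejected = True
```

The point is that rejection happens by type name before rendering. There is no step where model output gets evaluated as code.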

The Interaction Change That Actually Matters

This part I find genuinely exciting. Most AI interactions right now are optimized around language: question, text answer, follow-up question. That made sense when language was all we had. But a lot of tasks aren't really language tasks. Booking something, filtering a dataset, filling out a form, reviewing a report: these are interface tasks. Forcing them through a chat box is just friction.

With A2UI, agents generate complete forms with date pickers, time selectors, and submit buttons. Users interact with native UI components, not endless text exchanges. For people who've spent years watching AI assistants ask five questions when one form would've done the job, that's a relief.

And it streams. A2UI supports incrementally parsing and rendering UI components as they're being generated - no waiting for the full JSON block. The interface builds in front of you rather than appearing all at once after a delay. Small thing, but it feels noticeably better.
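A rough sketch of how incremental rendering can work, assuming the agent emits one complete JSON message per line. That wire format is my assumption for illustration, and `StreamingParser` is a hypothetical helper, not part of any A2UI SDK.

```python
import json


class StreamingParser:
    """Buffers text chunks and returns each complete newline-delimited JSON message."""

    def __init__(self):
        self._buffer = ""

    def feed(self, chunk):
        # Append the new chunk, then peel off every complete line.
        self._buffer += chunk
        messages = []
        while "\n" in self._buffer:
            line, self._buffer = self._buffer.split("\n", 1)
            if line.strip():
                messages.append(json.loads(line))
        return messages  # Any partial line stays buffered for the next chunk.


parser = StreamingParser()
rendered = []

# Chunks split mid-message, the way a token stream might arrive.
chunks = [
    '{"type": "TextField", "label": "Day"}\n{"type": "Bu',
    'tton", "label": "Book"}\n',
]

for chunk in chunks:
    for message in parser.feed(chunk):
        rendered.append(message["type"])
        print("render now:", message["type"])
```

The text field can be shown as soon as the first chunk lands, even though the button's JSON is still only half there.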

Who's Already Using It

Google's Opal team, whose product lets hundreds of thousands of people build and share AI mini-apps using natural language, has been a core contributor to A2UI. The team has been using it for rapid prototyping and is integrating it into the core app-building flow.

There's also a personal health companion app built by a Flutter team that uses A2UI to replace static dashboards with dynamically generated health widgets. Rather than forcing users to dig through sub-menus, a central LLM-powered chat can dynamically generate UI widgets on the fly, surfacing critical lab results, vaccine expirations, or clinic locations based on immediate context. That's not a demo, that's a real product solving a real problem.

CopilotKit built compatibility on day one. Flutter's GenUI SDK uses A2UI under the covers already. Renderers exist for Flutter, Lit, Angular, and React. It's early, but the ecosystem is moving.

Where It Currently Stands

A2UI is at v0.8 (v0.9 in recent releases) and is described as a public preview: functional, but still evolving. This isn't production-ready for everything yet. The spec is still changing, some renderers are more mature than others, and there are rough edges if you go digging.

But honestly, for something that went public only a few months ago, the pace of development is fast. v0.9 brought a proper React renderer, a shared web-core library, an Agent SDK to simplify the server side, and client-defined functions for things like validation.

What It's Actually Pointing Toward

Here's my read on the bigger picture. The way most people interact with AI right now (typing into a chat box, getting a text response, typing again) is a temporary interface paradigm. It was the fastest thing to ship. It works well enough for enough things that nobody's pushed too hard to replace it.

A2UI is part of a slow, quiet shift toward AI that actually adapts its output to the task instead of flattening everything into the same conversational format. Sometimes text is the right answer. Sometimes a chart is. Sometimes a form is. Sometimes a map is. The interface should match the question.

That's what A2UI is building toward. And it's the first piece of infrastructure I've seen that could actually make it real, across platforms, without someone hand-coding every possible interaction in advance.

Worth paying attention to.
