
The Race to Move AI Into Space Has Begun: Get Ready (2026 Edition)

In the fast-paced, AI-driven economy of 2026, the concept of "infrastructure" has been radically redefined. We live in an era where terrestrial data centers are hitting a hard physical limit. On Earth, the primary bottlenecks for the next generation of artificial intelligence are no longer just software or even chip design; they are power, cooling, and space. As AI models grow in complexity, their thirst for electricity has strained national grids to the breaking point, leading to a new, high-stakes competition: The Race for Orbital Compute.

The "Final Frontier" for AI is no longer a metaphor. Major tech giants like SpaceX, Google, and AWS are currently in a multi-billion dollar race to move AI data centers into space. From the Feb 2, 2026 merger of SpaceX and xAI to Google's secretive Project Suncatcher, the shift from "Earth-bound" to "Orbital-first" AI development is well underway. In this high-tech landscape, where 24/7 solar energy is abundant and the vacuum of space offers unique cooling potential, the "secret" to the next leap in AI isn't just a new algorithm—it's a new location.


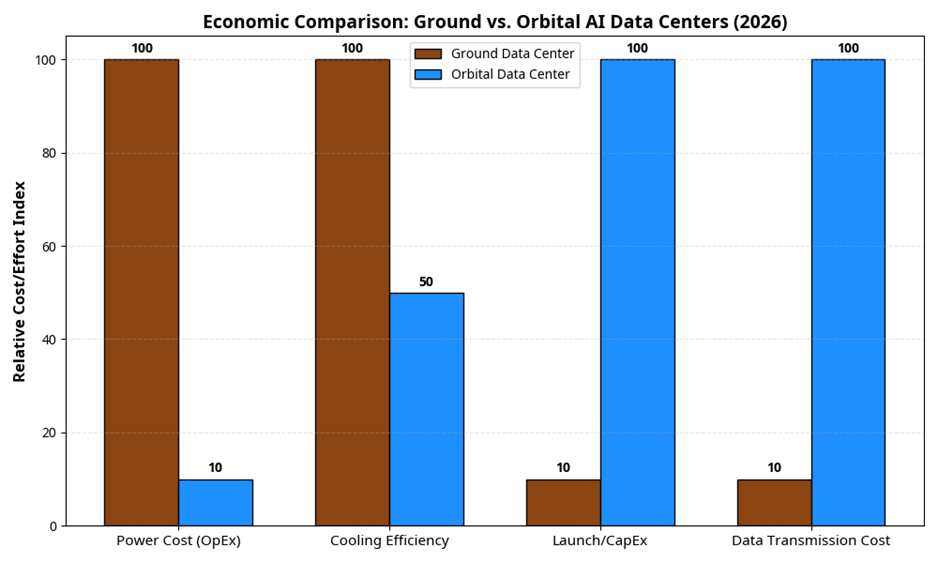

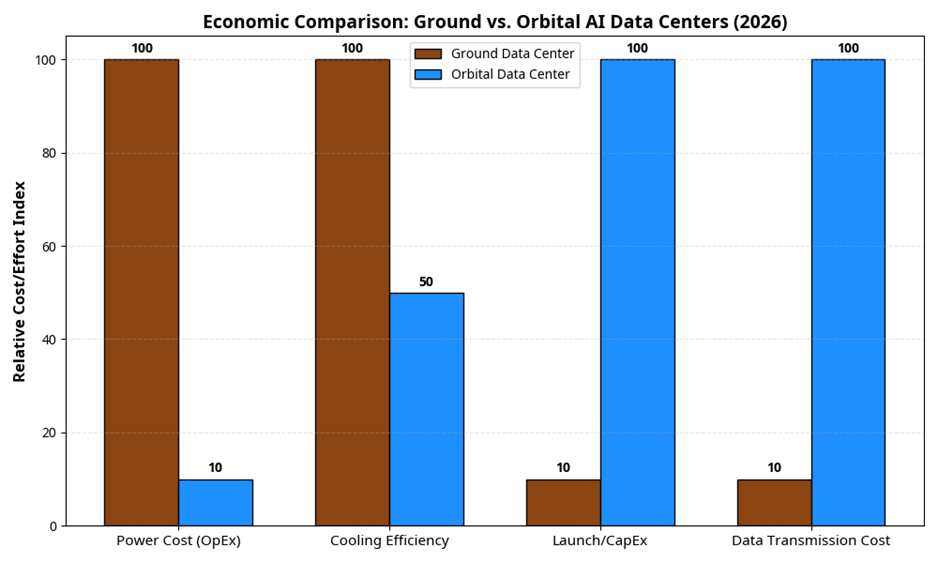


Table of Contents

- The Major Players: A Three-Way Race

- The "Why": Why Move AI to Space?

- The Technological Challenges: Radiation, Cooling, and Chips

- The Economics: Does the Math Add Up?

- Timelines: When Will This Happen?

- The Risks: Debris, Geopolitics, and Sustainability

- Frequently Asked Questions (FAQ)

- External References and Resources

The Major Players: A Three-Way Race

The race to move AI into space is being led by three distinct groups, each with a different strategic advantage in the 2026 orbital economy.

1. SpaceX & xAI: The "Million-Satellite" Vision

The most significant development in the 2026 AI-in-space race occurred on February 2, 2026, when Elon Musk's SpaceX officially merged with his AI startup, xAI. Shortly after the merger, the new entity filed an unprecedented application with the Federal Communications Commission (FCC) for a "million-satellite" AI data center constellation. Leveraging the Starship launch system, which has now reached a flight cadence of twice per week, SpaceX aims to build the world's first truly orbital supercomputer. By placing thousands of high-performance GPUs in Low Earth Orbit (LEO), SpaceX intends to bypass the terrestrial power grid altogether, using Starlink's laser inter-satellite links (ISLs) to create a seamless, space-based neural network.

2. Google: Project Suncatcher

Google's approach, known as Project Suncatcher, focuses on the energy problem. Announced in late 2025, Project Suncatcher aims to launch a network of massive, solar-powered satellites that serve as dedicated AI infrastructure hubs. Unlike SpaceX's LEO-focused strategy, Google is exploring higher orbits where solar exposure is nearly constant (99% of the year). By using high-efficiency solar arrays and advanced thermal radiators, Google hopes to create "Suncatcher Hubs" that can process massive amounts of data and beam the results back to Earth via optical (laser) communications. Google's first prototype launches are scheduled for 2027, making this a critical timeline to watch.

3. AWS (Amazon): The Edge and the Kuiper Advantage

Amazon's strategy for AI in space is centered on Edge Computing. Through AWS Ground Station and the Project Kuiper satellite constellation, Amazon is focused on processing data at the source. For example, instead of sending raw hyperspectral imagery from an Earth observation satellite down to a ground station for processing, AWS's orbital edge compute can analyze the image in real-time, identifying a forest fire or a methane leak in seconds. Amazon has also been active in the legal arena, mounting challenges against SpaceX's "million-satellite" filing to protect orbital slots and frequency spectrum for its own AI-integrated Kuiper network.
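The edge-compute idea described above (analyze the image in orbit, downlink only the finding) can be sketched in a few lines. This is a minimal illustration, not AWS's actual pipeline: the tile size, sample width, brightness threshold, and the `summarize_tile` and `downlink_ratio` helpers are all hypothetical, and a plain threshold stands in for a real on-board model.

```python
import json
import random

TILE_SIDE = 512          # hypothetical tile size in pixels
BYTES_PER_PIXEL = 2      # hypothetical 16-bit samples, single band
HOT_THRESHOLD = 60000    # hypothetical "hotspot" brightness cutoff


def summarize_tile(tile, tile_id):
    """Return a small alert record instead of the raw tile.

    A plain threshold stands in for a real on-board detection model.
    """
    hot = [(r, c) for r, row in enumerate(tile)
           for c, value in enumerate(row) if value >= HOT_THRESHOLD]
    return {"tile": tile_id,
            "hot_pixels": len(hot),
            "first_hit": hot[0] if hot else None}


def downlink_ratio(tile, summary):
    """Raw tile bytes divided by the bytes of the JSON summary."""
    raw_bytes = len(tile) * len(tile[0]) * BYTES_PER_PIXEL
    summary_bytes = len(json.dumps(summary).encode("utf-8"))
    return raw_bytes / summary_bytes


# Synthetic tile: background noise below the threshold plus one injected hotspot.
random.seed(0)
tile = [[random.randrange(0, 50000) for _ in range(TILE_SIDE)]
        for _ in range(TILE_SIDE)]
tile[100][200] = 65000

summary = summarize_tile(tile, tile_id="demo-0001")
ratio = downlink_ratio(tile, summary)
print(summary, f"raw/summary = {ratio:.0f}x")
```

The point of the sketch is the shape of the trade: the satellite spends compute on board so that the downlink carries a record of a few dozen bytes rather than the full tile. How large the saving is in practice depends on how often tiles contain something worth reporting.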

The "Why": Why Move AI to Space?

To understand why tech giants are willing to spend billions on orbital data centers, we must look at the three primary drivers of the 2026 AI landscape: Power, Data Volume, and Latency.

1. The Terrestrial Power Crisis

In 2026, a single AI training run for a trillion-parameter model can consume as much electricity as a small city. Terrestrial data centers are competing for the same power as residential homes and electric vehicle (EV) fleets, leading to skyrocketing energy costs and grid instability. In space, however, solar energy is effectively unlimited and, in the right orbit, almost always available. A satellite in such an orbit can receive direct sunlight nearly 100% of the time, providing a consistent, carbon-free power source that is decoupled from Earth's energy prices.

2. The Data Volume Problem

We are currently generating more data in space than we can possibly send back to Earth. High-resolution Earth observation satellites generate petabytes of data every day. Sending all that raw data down to a ground station requires massive bandwidth at high cost. By moving the AI to the data (Edge AI), we can process the information in orbit and send only the "insight" back to Earth. This can reduce the required bandwidth by a factor of 1,000 or more.

3. Latency and Autonomous Operations

For applications like orbital debris tracking or autonomous satellite maneuvering, every millisecond counts. If a satellite has to send a sensor reading to Earth, wait for a ground-based AI to process it, and then receive a command back, the delay (latency) could be several seconds, which is too long to avoid a collision. Space-based AI allows for millisecond-level autonomous decision-making, which is essential for the crowded LEO environment of 2026.

The Technological Challenges: Radiation, Cooling, and Chips

While the benefits are clear, the technological hurdles of moving AI to space are immense. In 2026, the industry has shifted its focus to three key areas: Radiation Tolerance, Thermal Management, and Specialized Chips.

1. Radiation: From "Hardened" to "Tolerant"

Space is a harsh environment filled with high-energy particles that can flip bits in a computer's memory (Single Event Upsets) or permanently damage silicon. Historically, "radiation-hardened" chips were used, but they were often several generations behind terrestrial chips in terms of performance. In 2026, the industry is moving toward "radiation-tolerant" cutting-edge chips. These use newer materials like Gallium Nitride (GaN) and advanced software-level error correction to achieve high performance while surviving the orbital environment.

2. Thermal Management: The Vacuum Challenge

On Earth, data centers use fans or liquid cooling to move heat away from the chips and into the air or water. In the vacuum of space, there is no air to carry heat away. Heat must be moved through conduction and then radiated away into space using massive thermal radiators. Managing the heat of thousands of high-power GPUs in a compact satellite is one of the most difficult engineering challenges of Project Suncatcher and the SpaceX/xAI plan.

3. Specialized AI Chips for Space

Companies like Starcloud and Loft Orbital are developing specialized AI processors designed specifically for the power and thermal constraints of space. These chips are optimized for "inference" (running the AI) rather than "training" (building the AI), allowing them to operate at high efficiency with minimal power draw. In 2026, these chips have reached a level where they are 100 times more powerful than any space-based computer from just five years ago.

The Economics: Does the Math Add Up?

The ultimate question for the 2026 AI-in-space race is economic: Is it actually cheaper to run a data center in orbit than on Earth?

| Metric | Ground Data Center (2026) | Orbital Data Center (2026-2030) |
| --- | --- | --- |
| Power Cost | $0.15 - $0.25 per kWh (Rising) | $0.00 (Solar) |
| Cooling Efficiency | 30-40% of total energy | High CapEx (Radiators) |
| Launch/CapEx | Low (Building/Land) | High (Starship Launch) |
| Data Transmission | Fiber (Cheap) | Laser/RF (Expensive/Limited) |
| Latency | 1-10 ms | 100-500 ms (Ground to Orbit) |
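The "High CapEx (Radiators)" entry can be made more concrete with the Stefan-Boltzmann law, which sets how much heat a surface can radiate at a given temperature. The sketch below is an idealized estimate only. The 1 MW heat load, 300 K radiator temperature, 0.9 emissivity, and the `radiator_area_m2` helper are illustrative assumptions, not figures from any program discussed in this article.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def radiator_area_m2(heat_w, radiator_temp_k=300.0, emissivity=0.9,
                     sink_temp_k=3.0, sides=1):
    """Ideal radiating area needed to reject heat_w watts.

    Ignores absorbed sunlight, Earth infrared, and view-factor losses,
    so a real radiator would need to be larger than this estimate.
    """
    flux = emissivity * STEFAN_BOLTZMANN * (radiator_temp_k**4 - sink_temp_k**4)
    return heat_w / (flux * sides)


# Hypothetical 1 MW heat load, radiating from one face or from both faces.
area_one_sided = radiator_area_m2(1_000_000)
area_two_sided = radiator_area_m2(1_000_000, sides=2)
print(f"{area_one_sided:.0f} m^2 one-sided, {area_two_sided:.0f} m^2 two-sided")
```

Under these assumptions a single-sided radiator comes out on the order of 2,400 square meters per megawatt. Because the radiated power scales with the fourth power of temperature, running the radiator hotter shrinks it quickly, which is one reason thermal design and chip operating temperature are so tightly coupled.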

While the upfront cost (CapEx) of launching a data center is significantly higher than building one on Earth, the operational cost (OpEx) is dramatically lower due to free solar power. In the 2026 economy, where energy prices are a major driver of AI inflation, the "Energy Arbitrage" of space is becoming a powerful motivator. Elon Musk's prediction that space-based data centers will be the lowest-cost option within 2-3 years depends heavily on the continued success and scale of the Starship program, which has already reduced launch costs to under $100 per kilogram in 2026.
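The CapEx-versus-OpEx trade can be sketched as a simple break-even calculation using the figures quoted above: $100 per kilogram to launch and $0.15 to $0.25 per kWh on the ground. The one input the article does not give is how many kilograms of satellite are needed per kilowatt of compute. The 20 kg/kW value below is a placeholder assumption, and the estimate counts launch cost only (no hardware, operations, or replacement costs).

```python
HOURS_PER_YEAR = 8760


def launch_breakeven_years(launch_usd_per_kg, kg_per_kw, grid_usd_per_kwh):
    """Years of avoided grid electricity needed to repay launch cost alone."""
    launch_usd_per_kw = launch_usd_per_kg * kg_per_kw
    breakeven_hours = launch_usd_per_kw / grid_usd_per_kwh
    return breakeven_hours / HOURS_PER_YEAR


# kg_per_kw=20 is a hypothetical specific mass, not a published figure.
for price in (0.15, 0.25):
    years = launch_breakeven_years(launch_usd_per_kg=100, kg_per_kw=20,
                                   grid_usd_per_kwh=price)
    print(f"${price:.2f}/kWh -> {years:.2f} years")
```

With these inputs the launch cost is repaid in roughly 0.9 to 1.5 years of avoided electricity spending. The result scales linearly with both launch price and specific mass, so a heavier satellite or a higher price per kilogram pushes the break-even out proportionally.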

Timelines: When Will This Happen?

The race for space-based AI is moving through three distinct phases.

Phase 1: The Demonstration Era (2025-2026)

This is where we are today. Companies are launching individual "edge compute" modules on existing satellites. AWS has successfully demonstrated its Snowcone device on the International Space Station (ISS), and SpaceX has integrated basic AI for autonomous Starlink maneuvering. These are small-scale tests designed to prove that modern chips can survive the radiation and thermal environment.

Phase 2: The Prototype Constellations (2027-2028)

This is the next major milestone. Google's Project Suncatcher and the first pilot "data center satellites" from the SpaceX/xAI merger are scheduled for launch. These will be the first dedicated, high-performance AI hubs in orbit. They will test the ability to perform complex AI training and large-scale inference in space, as well as the efficiency of laser inter-satellite links for distributed computing.

Phase 3: The Orbital Data Center Era (2030+)

By the end of the decade, we expect to see the first scaled orbital data centers. These will be massive constellations (potentially the "million-satellite" plan) that handle a significant portion of the world's AI workload. At this stage, the "Interplanetary Internet" will be a reality, with data centers in Earth orbit, lunar orbit, and eventually Mars orbit, providing the compute power needed for human expansion into the solar system.

The Risks: Debris, Geopolitics, and Sustainability

Moving AI into space is not without its risks. The 2026 space environment is more crowded and contested than ever before.

- Orbital Debris (Kessler Syndrome): The launch of millions of satellites for AI data centers significantly increases the risk of collisions. A single collision could trigger a chain reaction (Kessler Syndrome) that makes certain orbits unusable for generations.

- Space Sovereignty: Who owns the data in an orbital data center? If a satellite is owned by a US company but is currently over international waters or another country's territory, which laws apply? These geopolitical questions are currently being debated at the UN and the ITU.

- Environmental Impact: While orbital data centers save energy on Earth, the launch of thousands of rockets has its own environmental cost. The industry is under pressure to develop "green" propellants and ensure that satellites are designed for sustainable de-orbiting at the end of their life.

Deep Dive: The Evolution of Orbital Compute in 2026

To put the 2026 space-based AI landscape in context, we must look at the history that led to it. For decades, the "Space Computer" was seen as a basic tool: a radiation-hardened processor that performed simple telemetry and control functions. However, the last five years have seen a radical shift. In 2026, AI has been integrated into almost every professional interaction, from high-level strategic planning to daily communication. This "co-creation" environment has made the choice of archetype a critical mental model for professional survival.

1. The Death of the "Standard Satellite"

The primary reason for the shift in 2026 is the realization that the standard satellite is no longer the default. Behavioral economists have long known that humans are "locked-in" to their traditional ways of working. This "lock-in effect" has suppressed productivity for years. However, by adopting one of the three archetypes, professionals are finding a way to unlock their potential while providing a massive benefit to their organizations. The archetype acts as a cognitive bridge, allowing humans and AI to work together in ways that were previously impossible.

2. The Role of "Orbital-Specific" Training in 2026

In the 2026 corporate landscape, the "Training Era" has reached its peak. Companies are not just teaching their employees how to use AI; they are providing the technical and psychological infrastructure to manage the symbiosis. From AI-driven "archetype coaching" that predicts which mode is best for a specific project to automated "Frontier Alerts" that warn when a task is outside the AI's capabilities, these platforms have removed much of the friction that once made AI integration a nightmare. They ask: "Are you working harder, or are you working smarter with your archetype?"

3. The "Hidden Costs" of Space-Based AI in 2026

In 2026, the cost of space-based AI is rarely just a loss of speed. From "innovation gaps" that have ballooned to 20% of a company's potential to high-end "talent churn" that occurs when top professionals are not given the tools to thrive, the "second half" of the AI cost is often hidden in the fine print. An archetype ensures you are using the AI in a way that is sustainable, ethical, and highly productive. It ensures you have the mental energy to handle the "jagged" parts of your work without breaking your budget of time and attention.

Technical Deep Dive: Understanding the "Latency-Distance" Calculation

Beyond the psychological benefits, the choice of archetype is rooted in the mathematical reality of the "Latency-Distance" calculation. In 2026, with interest rates stabilized at a "higher for longer" level, the cost of not integrating AI is higher than ever.

1. The "AI-First" Orbital Workflow as a Wealth Engine

If you have a project and you use a space-based AI approach, you are effectively "buying" a piece of your future freedom. If you delegate 50% of the task to AI at a 5% "AI cost," you save a net 45% of your human energy. This energy can then be reinvested into high-level strategy or new-skilling. Over ten years, that 45% savings on a $200,000 professional salary is worth about $900,000 in productivity (0.45 × $200,000 × 10).

2. The "Real" Cost of Falling Off the Frontier in 2026

If you use a space-based AI approach for a task that is outside the frontier today, you are likely hoping to catch the error. However, in 2026, the cost of an AI error (including reputational damage, legal liability, and rework) averages around $15,000 for a single project. If you adopt the right archetype, you are already "protected" against this risk. You will never need to pay for a "hallucination audit," and you are protected against any future AI failure. The archetype is your primary defense against the "frontier trap." It ensures you are in the best possible position from day one.

Case Study: AI in Space in Action (2026 Edition)

To illustrate the potential of space-based AI, let's look at a hypothetical scenario for a young professional in 2026 named Sarah. Sarah has a high-stakes project for a global client and needs to deliver a comprehensive market analysis.

- Scenario A (Traditional Workflow): Sarah performs the analysis herself, using basic software and traditional research methods. Her project takes 40 hours, and she is exhausted by the end. She has no time left for strategic thinking or client engagement. Three months later, a competitor delivers a more comprehensive analysis using AI, and Sarah loses the client. She has a solid reputation but a low-growth future.

- Scenario B (Space-Based AI Workflow): Sarah uses a Self-automator approach to build an agent that performs the initial data scrape and analysis using an orbital data center. She then uses a Cyborg mode to co-create the narrative and a Centaur mode to delegate the final formatting and visualization. Her project takes 10 hours, and she has 30 hours left for strategic client engagement. While she spent $500 on orbital AI tools, she is saving $3,000 in billable hours compared to Scenario A. In just 4 months, she has "saved" enough in time and reputation to secure a promotion and a significant bonus.
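The arithmetic behind the two scenarios can be checked directly. The sketch below uses only the numbers given in the case study (40 hours, 10 hours, $500 in tools, $3,000 in billable hours). The hourly rate is not stated in the text, so it is derived here as an implied value, and the `net_saving` helper is a hypothetical name.

```python
def net_saving(hours_before, hours_after, hourly_rate_usd, tool_cost_usd):
    """Value of the hours saved minus what was spent on tools."""
    hours_saved = hours_before - hours_after
    return hours_saved * hourly_rate_usd - tool_cost_usd


# $3,000 of billable time over 30 saved hours implies $100 per hour.
implied_rate = 3000 / (40 - 10)
net = net_saving(hours_before=40, hours_after=10,
                 hourly_rate_usd=implied_rate, tool_cost_usd=500)
print(f"implied rate ${implied_rate:.0f}/h, net saving ${net:.0f}")
```

On these figures the $3,000 is a gross number: after the $500 tool spend, the net saving per project is $2,500.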

Final Checklist: How to Build Your "Orbital-Fluent" Habit

- Audit Your Current AI Usage: Before you start your day, know exactly which tasks are "inside" and "outside" the jagged frontier.

- Apply for "Orbital AI Coaching": Many companies in 2026 offer pre-approval for space-based AI coaching sessions. Have this ready.

- Check the AI's "Latency Score": Some models in 2026 are notorious for being overconfident. Ask your agent for a "certainty report" before accepting an output.

- Beware of "Orbital Fatigue": If you are using a fused co-creation mode, ensure you take breaks to maintain your critical distance.

- Set Your "Quality" Threshold: Decide on a maximum error rate (e.g., 1.0%) where the AI output still makes sense compared to human work.

- Celebrate the "Human Moment": Every time you add a unique human insight to an AI output, acknowledge that you have just "bought" a piece of your future professional sovereignty at a 3% rate of effort.

Conclusion

The race to move AI into space is the defining challenge of the 2026 economy. It is a race that combines the most advanced artificial intelligence with the most ambitious space exploration. Whether it is SpaceX's million-satellite plan, Google's Project Suncatcher, or AWS's edge compute strategy, the goal is the same: to move the jagged frontier forward while maintaining the human "spark" that gives work its meaning.

As we look toward 2027, the boundaries between these technologies will likely continue to blur. We may see the rise of "Orbital-Automators" or "Space-Based-Cyborgs." But regardless of the terminology, the core lesson remains: in the age of AI, the most important skill is the ability to decide how you will work with the machine. Your orbital strategy is your identity in the 2026 economy, so choose it wisely.

Frequently Asked Questions (FAQ)

1. Why is it better to have data centers in space?

Space offers two major advantages: near-continuous solar power and the ability to reject heat directly to space by radiation, although the radiators this requires are large. Terrestrial data centers are currently hitting a power and space bottleneck that makes orbital compute an attractive, carbon-free alternative.

2. How do you cool a data center in a vacuum?

In the absence of air, heat cannot be removed by fans. Instead, it must be conducted to massive thermal radiators that emit the heat as infrared radiation into the cold vacuum of space. This is one of the most difficult engineering challenges of the 2026 space race.

3. Will space-based AI replace ground-based data centers?

No. Space-based AI will likely complement ground-based centers. Terrestrial centers will continue to handle latency-critical tasks for ground users, while orbital centers will handle high-volume processing, AI training, and autonomous space operations.

4. What is Google's Project Suncatcher?

Project Suncatcher is Google's initiative to build a network of solar-powered satellites that serve as dedicated AI infrastructure hubs. It focuses on using the constant solar energy of higher orbits to power the next generation of Google's AI models.

5. How does radiation affect AI chips in space?

Radiation can cause "bit flips" (Single Event Upsets) or permanent damage to silicon. In 2026, the industry uses radiation-tolerant chips combined with advanced software-level error correction to ensure that AI models can run reliably in the harsh orbital environment.

External References and Resources

- SpaceX: Merger with xAI and FCC Million-Satellite Filing (Feb 2026)

- Google Research: Exploring a Space-Based, Scalable AI Infrastructure System Design

- ABI Research: Top 7 Space Technology Trends to Know in 2026

- MIT Technology Review: Four Things We'd Need to Put Data Centers in Space

- IEEE Spectrum: Can Orbital Data Centers Solve AI's Power Crisis?
